[Satanism and Wicca] are to world religions what JavaScript is to programming languages. -- Juli Mallett

...Debugging-by-printf is a universal theme that transcends cultures and concrete syntaxes. This shit is Joseph Campbell, yo. -- Jason Reed

...real programmers often wear climbing boots to work in case a mountain should suddenly spring up in the middle of the machine room. -- anonymous

Happy new year!

Friday, December 31, 2010

Nice quotations

Wednesday, December 29, 2010

PL Predictions for 2011

- JSON will be the default format for new internet APIs.

- As more people use JSON, we'll see an XML renaissance, as we - for the first time - discover that XML actually gets some things right. No really.

- GHC will get typed type-level programming and end this highly embarrassing state of untypedness. ;)

- We'll have a verified compiler-and-OS toolchain for compiling and running some kinds of apps. It won't be x86-based.

- All kinds of stuff targeting JavaScript.

- Split stacks and maybe some scheduler improvements will be shown to be competitive with green threads in the millions-of-threads!!1! (anti-)scenario.

Sunday, December 19, 2010

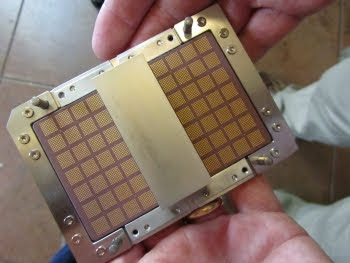

Quick, what does this machine do?

From Dieter Rams, ten principles for good design.

HXA just published A comment adding to Dijkstra on natural language programming:

Software must always be an ‘un-natural language’ because its (ultimate, essential) purpose is different (and particular): it is not communication, it is design. ...

When you look at software, what you see is not a language, it is a machine.

I'll have to come back to this topic at another time, for now take it as a fine weekend inspiration.

[Update: Of course, Lev Manovich comes to mind immediately. From his Info-aesthetics:

Never before a single machine was an engine of economy -- AND the main tool for representation.

[Further riffing off on this idea reveals that our major platform for delivering software is called a (markup) language.]]

Thursday, December 16, 2010

On Git

A much simpler view IMO is to consider the Git repositories as narratives, or meta-narratives, each committer being seen as an author of one or more. This is made particularly clear in the git man pages, where a predominant concept is the distinction between closing and opening — in a sense, it promotes the use of structural deappropriation to challenge the linear view of “revisions”. As Torvalds says, “[l]inearity is fundamentally impossible”, so the repository — or at least, a branch — is interpolated into a realism that includes the “current” revision (tip or head, as you prefer) as a reality. To put it in political terms, Mercurial deconstructs Marxist socialism, while Git analyses capitalist construction.

Tuesday, December 14, 2010

HTTP request visualization

You can clearly see the handshake, slow-start ramp-up and full bandwidth phases.

Packet Flight: HTTP request @ 40X from Carlos Bueno on Vimeo. (via Russ Cox)

Saturday, December 4, 2010

objectophilia alert!

Plan 9 is in the works. This is a nice test and development platform for software. We're going to make the design available in a way that people can easily build their own.

Wednesday, December 1, 2010

User space? Almost certainly not.

> Should this kind of thing be done in user space?

Almost certainly not.

First off, user-space is a fragmented mess. Just from a "let's get it done" angle, it just doesn't work. There are lots of different thing that create new tty's, and you can't have them all fixed. Plus it would be _way_ more code in user space than it is in kernel space.

Secondly, user-space daemons are a total mess. We've tried it many many times, and every time the _intention_ is to make things simpler to debug and deploy. And it almost never is. The interfaces end up being too painful, and the "part of the code is in kernel space, part of it is in user space" means that things just break all the time.

Finally, the whole "user space is more flexible" is just a lie. It simply doesn't end up being true. It will be _harder_ to configure some user-space daemon than it is to just set a flag in /sys or whatever. The "flexibility" tends to be more a flexibility to get things wrong than any actual advantage.

Just look at the patch in question. It's simple, it's clean, and it "just works". Doing the same thing in user space? It would be a total nightmare, and exactly _because_ it would be a total nightmare, the code would never be that simple or clean.

Linus

(from LWN)

Ghosts of Unix Past

Nulla Salus Extra Ecclesiam

There is always a well-known solution to every human problem - neat, plausible, and wrong. — H. L. Mencken

In software design, there often appears to be a situation where you either get a concept exactly right, or it will just be plain wrong and mess up your entire downstream development. (Maybe sometimes there are multiple good ways to solve a problem, instead of just one, but the wrong ones will always outnumber them.)

I think a good example is the difference in lexical scoping in Scheme vs Python. For my tastes, Scheme gets it exactly right, and harvests a lot of benefits from its clean design, whereas Python is just plain wrong, and harvests a lot of pain, everywhere. [In my limited experience.]

How can we avoid making systemic design errors? One way is to stick to what works. If you're designing a new PL, and your lexical scoping is different from Scheme or Haskell, I think you have a lot of explaining to do.

To know what actually works, you need taste. To create new designs without systemic error, you need a lot of midnight oil.

Which brings us back to:

Why do you glorify doing something new and stupid, when doing good things well is what people really should be admiring. — Linus Torvalds

Tuesday, November 30, 2010

Systems programmers relax, most advice isn't for you

Thus, programming advice of the DTSTTCPW ("do the simplest thing that could possibly work") variety, i.e. 99% of programming advice, always makes me a bit uneasy. Instead of thinking, shouldn't I just be crankin' out code, putting it online, and blogging about it on LJ?

But a couple of days ago I made an observation: almost all programming advice applies to applications, but what I'm mostly working on are systems. Since then, I'm sleeping better.

As Dr. Theodor Holm Nelson says, you can't get to the moon by piling up chairs. You may be able to learn something about an application by cranking out a quick and dirty prototype. But for systems, prototyping seems futile. Either your ideas work, or they don't, and a quick gedankenexperiment will usually be enough to find out.

Of course, I don't want to denounce experimentation as worthless. You have to know whether your ideas will be useful and work on really existing computers. But if your system is so removed from reality or complex that you're unsure about that, you're probably doing it wrong.

Take Unix pipes. There's an early memo about the need for pipes from 1964. And in 1972, pipes appeared in Unix. Do you think they spent 8 years DTSTTCPWing? I don't think so. I think that in these 8 years they spent a lot of quality time thinking about the issue, and when they had the right idea, they just knew it was right, and went ahead and implemented it.

So, to all systems programmers out there: most programming advice isn't for you. Relax, and take the time to find the right ideas.

Thursday, November 18, 2010

Numbers Everybody Should Know

| Operation | Latency |
| --- | --- |
| L1 cache reference | 0.5 ns |
| Branch mispredict | 5 ns |
| L2 cache reference | 7 ns |
| Mutex lock/unlock | 25 ns |
| Main memory reference | 100 ns |
| Compress 1K bytes w/ cheap algorithm | 3,000 ns |
| Send 2K bytes over 1 Gbps network | 20,000 ns |
| Read 1 MB sequentially from memory | 250,000 ns |
| Round trip within same datacenter | 500,000 ns |
| Disk seek | 10,000,000 ns |
| Read 1 MB sequentially from disk | 20,000,000 ns |
| Send packet CA->Netherlands->CA | 150,000,000 ns |

For more details: Agner's Software optimization resources.
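To get a feel for the ratios hiding in that table, here is a small Python sketch. The dictionary only copies the figures listed above; the helper name `ratio` is made up for illustration.

```python
# Latencies in nanoseconds, copied from the table above.
LATENCY_NS = {
    "L1 cache reference": 0.5,
    "Branch mispredict": 5,
    "L2 cache reference": 7,
    "Mutex lock/unlock": 25,
    "Main memory reference": 100,
    "Compress 1K bytes w/ cheap algorithm": 3_000,
    "Send 2K bytes over 1 Gbps network": 20_000,
    "Read 1 MB sequentially from memory": 250_000,
    "Round trip within same datacenter": 500_000,
    "Disk seek": 10_000_000,
    "Read 1 MB sequentially from disk": 20_000_000,
    "Send packet CA->Netherlands->CA": 150_000_000,
}


def ratio(slow, fast):
    """How many `fast` operations fit into the time of one `slow` operation."""
    return LATENCY_NS[slow] / LATENCY_NS[fast]


if __name__ == "__main__":
    print(ratio("Disk seek", "Main memory reference"))           # 100000.0
    print(ratio("Main memory reference", "L1 cache reference"))  # 200.0
```

So, by these figures, one disk seek costs as much as a hundred thousand main memory references.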

Foundations for Structured Programming with GADTs

GADTs are at the cutting edge of functional programming and become more widely used every day. Nevertheless, the semantic foundations underlying GADTs are not well understood. In this paper we solve this problem by showing that the standard theory of datatypes as carriers of initial algebras of functors can be extended from algebraic and nested data types to GADTs. We then use this observation to derive an initial algebra semantics for GADTs, thus ensuring that all of the accumulated knowledge about initial algebras can be brought to bear on them. Next, we use our initial algebra semantics for GADTs to derive expressive and principled tools -- analogous to the well-known and widely-used ones for algebraic and nested data types -- for reasoning about, programming with, and improving the performance of programs involving, GADTs; we christen such a collection of tools for a GADT an initial algebra package. Along the way, we give a constructive demonstration that every GADT can be reduced to one which uses only the equality GADT and existential quantification. Although other such reductions exist in the literature, ours is entirely local, is independent of any particular syntactic presentation of GADTs, and can be implemented in the host language, rather than existing solely as a metatheoretical artifact. The main technical ideas underlying our approach are (i) to modify the notion of a higher-order functor so that GADTs can be seen as carriers of initial algebras of higher-order functors, and (ii) to use left Kan extensions to trade arbitrary GADTs for simpler-but-equivalent ones for which initial algebra semantics can be derived.

Mentioned by Oleg in GADTs in OCaml.

Wednesday, November 17, 2010

Boltzmann Samplers for the Random Generation of Combinatorial Structures

From the paper Boltzmann Samplers for the Random Generation of Combinatorial Structures.

While we're at it, Z-order space-filling curves are hot:

In 3D:
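For reference, a Z-order (Morton) index is obtained by interleaving the bits of the coordinates. Below is a minimal Python sketch; the function names are made up, and putting x in the lowest bit is just one common convention, assumed here.

```python
def interleave2(x, y, bits=16):
    """Z-order index of (x, y): bit i of x lands at bit 2i, bit i of y at bit 2i+1."""
    z = 0
    for i in range(bits):
        z |= ((x >> i) & 1) << (2 * i)
        z |= ((y >> i) & 1) << (2 * i + 1)
    return z


def interleave3(x, y, z, bits=10):
    """Same idea in 3D: the bits of x, y and z rotate through the result."""
    m = 0
    for i in range(bits):
        m |= ((x >> i) & 1) << (3 * i)
        m |= ((y >> i) & 1) << (3 * i + 1)
        m |= ((z >> i) & 1) << (3 * i + 2)
    return m


if __name__ == "__main__":
    cells = [(x, y) for y in range(2) for x in range(2)]
    # Sorting grid cells by their index walks them in the shape of a Z.
    print(sorted(cells, key=lambda c: interleave2(*c)))
    # [(0, 0), (1, 0), (0, 1), (1, 1)]
```

Cells that are close on the curve tend to be close in space, which is what makes the index attractive for spatial lookups.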

Why are objects so unintuitive

All of the mappings we have so far from real-world problems to software realizations are either bad (in the sense of imprecise and convoluted) mappings or map to pretty bad models (in the sense that the models are either devoid of semantics or have semantics too complex for the working programmer to really understand). OO "works" because it is the least-bad and least-incomprehensible mapping that has been identified to date.

The thread is also notable for David Barbour's treatise Being poor at modeling is essential to OOP:

The poor modeling facilities of OOP are caused by essential properties of OOP. These essential properties include encapsulation and message-passing polymorphism. These are the same properties that make OOP powerful for configurable modularity, scalability, and object capability security. ...I do not believe that modeling 'tax reports' in OOP is something that we would 'want' to do. Ever. We might need a tax-report value object as an artifact of the dataflow system (e.g. if tax-reports are dumped into the system as complex form objects), but that's just plain-old-data and could be done just as easily in functional or procedural, and is really not 'OOP'. ...

Your comments (modeling the situation, wanting to model the 'tax reports' explicitly even when it is unnecessary, etc.) and those like them (being extremely prevalent in OO books and the education), are what lead people to start each program by writing a business simulator when what they want are business data processors.

Thursday, November 11, 2010

Dependent types linkdump

Here's a list of some papers I've found worthwhile or just found, and not read yet...

- Fun with Type Functions (slides). Oleg Kiselyov, Simon Peyton Jones, Chung-chieh Shan. 2010.

How to fake it with Haskell's recently added type families. But Haskell increasingly looks horribly impure: it's not just that every type's inhabited by bottom, type-level programming is untyped! I think they really should work on their purity! ;) Mentions Ωmega's kind system - my prediction: typed type-level programming is coming to Haskell soon.

- Proof-assistants using dependent type systems. Henk Barendregt, Herman Geuvers. 2001.

Recommended as a great intro to all things dependent typing. Not by me though, as I still have to read it.

- ΠΣ: Dependent Types without the Sugar. Thorsten Altenkirch, Nils Anders Danielsson, Andres Löh, Nicolas Oury. 2009ish.

What's under the sugary hood of dependent programming languages. For when I develop a dependent language - because I'll have to.

- A Tutorial Implementation of a Dependently Typed Lambda Calculus. Andres Löh, Conor McBride and Wouter Swierstra. 2009.

Also frequently mentioned as an intro to dependent types.

- Verified Programming in GURU. Aaron Stump. 2009.

A book on dependent programming with a focus on verification.

- Generic Programming with Dependent Types (part II, part III). Stephanie Weirich. 2010.

Slides focussing on the extra expressivity afforded by dependent types. Agda.

- An Epigram Implementation. Edwin Brady, James Chapman, Pierre-Evariste Dagand, Adam Gundry, Conor McBride, Peter Morris, Ulf Norell, Nicolas Oury. 2010.

The 300-page literate Epigram (2?) implementation.

Sunday, October 31, 2010

Concurrency as Basis for Scalable Parallelism

It changed my thinking: from "ouch, this is a lot of data to process" to "wow, lots of opportunities for concurrency, and thus parallelism".

It has been observed that many 'concurrent' applications fail to scale beyond a few parallel components. In many cases, some sort of data-parallelism is feasible (e.g. parmap, SIMD, GPU) and we should certainly be able to leverage those opportunities! But I'd like to address just the concurrency aspect - the coordination of diverse systems and interests - and argue that even that is a sufficient basis for scalable parallelism, assuming we leverage it properly.

The scalability of concurrency as a basis for parallelism comes in realizing that the number of relationships in a system can rise O(N^2) with the number of components. Thus, we need to embed most significant computation into the relationships, rather than the components. ...

When most computation is moved to the relationships, the resources used by a service will scale commensurately with the number of clients it directly services - and vice versa; resources used by a client will be commensurate with the number of services it directly uses.

Monday, October 25, 2010

Notes on Dependent Types

A couple of systems I've (superficially, so far) looked at are Ωmega, Epigram, Coq, and Agda.

Ωmega is closest to existing FP languages like Haskell, which makes it easier to grok than the others for newbies - it's not a full-spectrum dependent type system by design. Technically speaking, Ωmega is "faking it" by maintaining a strict phase distinction between the value and the type level (and the kind level ...). Proofs are represented as runtime instances of GADT datatypes.

GADTs, or generalized algebraic datatypes, are a more powerful (generalized) form of ordinary ADTs, such as ML's 'a list, that are used as datatypes (or "object system") in many dependently typed systems. GADTs are very closely related to generics in Java and C#; understanding generics and their proposed higher-level extensions thus provides a gentle route into dependent types.

Java and C#'s object systems already have all of the power of GADTs, only they're not as easy to use, and they are not as typesafe. What separates GADTs from ADTs can be seen in this Java code:

class A<T> {}

class B extends A<Foo> {}

B extends A with T fixed to Foo, so instances of B have type A<Foo>, by subtyping. With ordinary ADTs, B would also need to be parameterized by T - individual type constructors of an ADT, which we've expressed here as Java classes, cannot fix a type parameter to a specific type. GADTs are attractive because they're a modest extension of existing ADT theory, and yet enable a wide range of data structuring styles used in fancypants typeful programming.

Epigram is programmatically aligned with Ωmega - both are passionate about the advances in programming made possible by dependent types, and vocally call programmers and researchers to join them on their quest. Epigram, in contrast to Ωmega, goes whole hog dependent typing. This includes arbitrary type-level computation, a succinct 2D-layouted syntax, and interactive support by the development environment that goes beyond what IDEs for ordinary languages offer (and can offer).

Coq is different from Ωmega and Epigram in that it's designed as a proof assistant, and not a dependent programming language. Adam Chlipala maintains that Coq is the most useful implementation to date for expressing complex proofs, and that systems like Epigram are more useful when proofs arise very naturally from the structure of the program and/or its data. Coq programs, such as Xavier Leroy's verified C compiler, have a different flavor from ordinary and dependent functional programs. Concoqtion has an O'Caml value level, and a Coq type level.

One problem with all dependently typed systems is that you have to deal with non-terminating type-level programs somehow, otherwise your logic becomes unsound. There are different strategies: Ωmega type-level programs are written as term rewriting systems, that have to terminate; Epigram and Coq programs are written in total languages - every program terminates; in Agda, programs are interrupted when they get stuck in a loop - soundness is regained through a separate termination check (I think). In practice, nontermination doesn't seem to be a big problem.

Some of the most interesting applications of dependent types are in the area of metaprogramming by program generation: you prove interesting static properties of your program, and then spit out a program in another language that is guaranteed to obey them dynamically. Which means that you can throw away and erase a lot of stuff at compile-time, that other languages have to remember and/or check at runtime. As Epigram implementers state, they have more information available about their programs statically, than they know what to do with. Consider: in a dependent language, once a program typechecks, you may not ever need to run it!

The existing systems for doing dependent programming all seem very closely related, and "conceptual epiphanies" can be translated between them. Dependent types are an amazing area of research & development, and I urge you to join it.

Update:

Stephanie Weirich maintains that dependent types not only aid verification, but they also increase expressiveness of a language, and that generic programming is a killer-app for dependently-typed languages.

Also: what are dependent types actually good for? I think a good example is found in Why Dependent Types Matter: it shows how to write a list-sorting function, whose type proves that the output lists are always correctly sorted. I think a lot of software will be written this way in the near future.

Wednesday, October 20, 2010

Keeping up with the GNUses

- Michael Kerrisk's Linux man-pages

- Nick Clifton's GNU Toolchain Updates

Tuesday, October 19, 2010

Split stacks!

Monday, October 11, 2010

Monday, September 27, 2010

Program Your Size

But minimalism in programming may cause programs to appear cramped, may persuade you to choose forms of expression that are needlessly terse, and may also lead to programs that are hard to refactor or extend.

Point is, when you start programming, you don't know how big the program's gonna be, so starting with minimalism may be a bad choice. When looking at the sources of some huge programs, I found that some of them have found very relaxed ways to deal with their sprawling size. Gnus for example comprises dozens of large source files, containing hundreds or thousands of operational definitions, and yet remains very readable.

I don't want to get into the details of architecting large programs - I'd rather like to focus on a way to write programs, from the start, so that the source then has a "density" that's adequate to its size. Gnus for example is meandering, and that's perfectly adequate - Gnus being a mail reader for the most customizable OS in existence. That's a job that's never done, so the source should reflect that - you can't write a cute blog post about a program like Gnus, there's no single overarching, minimalist design principle.

Maybe, as Perlis said, every program needs to be written at least twice. After the first run, we know the approximate size of the program, and can then find a code density/terseness that's adequate to that size.

I find minimalism in source code is often limiting. In my next program, I'll write it from the start as if it were really huge.

Linus on transactional memory

So that's what it boils down to: transactions are "free" and a wonderful way to elide those horrible expensive locks.

But only if you never make a mistake.

They are expensive as hell even for very low rates of transaction failures. And you really cannot know statically (even if you don't end up reaching some transaction limit, you may easily end up just having heavy contention on the data structures in question).

So I claim that anybody who does transactional memory without having a very good dynamic fallback is basically totally incompetent. And so far I haven't seen anything that convinces me that competence even exists in this area.

Monday, September 20, 2010

The ghosts of blog posts past

This is a blog. It is a special medium. I'm not writing articles, which I expect to stand the test of time. I don't let my friends preview the posts I write, and point out their problems. Because I think there's a difference between writing articles (as, say, Paul Graham does) and blogging. And both have merits. This is a blog.

It presents my opinions and mood at a specific point in time. If you don't like it, argue with me, but don't take it too seriously. Take my posts like snarky or humorous remarks over a beer. That's how I write them. Because that's what I think is one of the things blogs are good for, and that's how I'm running this particular blog.

-- Manuel

Monday, September 13, 2010

The far side of the lambda cube

Reading a bit about this I found some interesting links:

Sage: Unified Hybrid Checking for First-Class Types, General Refinement Types, and Dynamic. A recent paper I found via Ahmed, Findler, Siek, and Wadler's new Blame for All. I find the use of a counter-example database in a compiler a bit frightening, though.

Henk: A Typed Intermediate Language, from 1997, by SPJ and Erik Meijer:

There is growing interest in the use of richly-typed intermediate languages in sophisticated compilers for higher-order, typed source languages. These intermediate languages are typically stratified, involving terms, types, and kinds. As the sophistication of the type system increases, these three levels begin to look more and more similar, so an attractive approach is to use a single syntax, and a single data type in the compiler, to represent all three.

The theory of so-called pure type systems makes precisely such an identification. This paper describes Henk, a new typed intermediate language based closely on a particular pure type system, the lambda cube. On the way we give a tutorial introduction to the lambda cube.

Languages of the Future, by Tim Sheard on Omega, on which I'll post something soon.

And, Your lambda-cube is puny.

A moment of respectful silence, please.

Sunday, September 12, 2010

Higher kinds are sexier

And it turns out, the theory behind that looks simpler, and thus sexier:

(types) T ::= (K K*)

(type constructors) K ::= X | C | (P* => T)

(type param defs) P ::= (X P*) | (<: (X P*) (C K*))

(class defs) D ::= DEFCLASS (C P*) ((C K*)*)

(method defs) M ::= DEFMETHOD (m P*) ((param T)* -> T)

I like the definition of types: a type's the application of a constructor to zero or more other constructors. Constructors may be variables (X), classes (C), or functions taking zero or more parameters and returning a type. (So you basically have a lambda calculus at the type-level.) A type parameter is a variable constructor with zero or more parameters, optionally bounded to a class type with zero or more type arguments. Class definitions are parameterized over zero or more type parameters, and extend zero or more existing classes. (I'm taking some liberties with adding multiple inheritance, hoping it doesn't mess up the theory. :P) Method definitions are, as usual, parameterized on the type- and term-level and have a result type.

It's kinda embarrassing to post this stuff here, since I'm so bad at type theory, but I think I can make it work. One insight giving me this faith is that none of this stuff survives till runtime: at runtime, only concrete instantiations, such as List

Friday, September 10, 2010

Formalizing generics

I've now made some progress in formalizing this, based on Featherweight Generic Java. Here's a basic overview of the type system in the FGJ style:

(types) T ::= C | X | Top

(classes) C ::= (klass T*)

(class defs) D ::= DEFCLASS (klass (<: X C)*) (C*) ((slot T)*)

(method defs) M ::= DEFMETHOD (method (<: X C)*) ((param T)* -> T)

Types are either class types (C), type parameters (X), or Top. Class types have a name (klass) and zero or more types as parameters. A class definition introduces a named class, parameterized over zero or more type parameters that have class types as bounds, zero or more superclass types, and zero or more named and typed slots. A method definition introduces a named method, parameterized over zero or more type parameters that have class types as bounds, with zero or more named and typed parameters and a result type.

Type parameters in a class definition or method definition (X) are scoped over the entire definition, including the bounds (in the style of F-bounded polymorphism).

All of this is pretty much equivalent to FGJ. The only difference is that classes do not contain methods.

[Updates: I've added type parameterization to method definitions. Added Top. Added some parentheses to make the syntax unambiguous.]

Wednesday, September 8, 2010

Minimal generics for an untyped language

Generics, or parametric polymorphism, are jolly useful, even on a totally limited scale: all I want from the first round of this endeavor is to support something like the following:

(defclass (list T))

(defmethod add ((list (list T)) (element T))

...)

Here, I define the class (list T) - a list parameterized over the type of its elements, T. That's the same as Java's List<T>. The method ADD takes such a list and an element, and adds it to the list.

To create an instance of a parameterized class, you have to pass a concrete type argument to use for the element type, as in:

(defvar *my-list* (make (list string)))

This creates a list that accepts strings (and instances of subclasses of string) as elements.

The instance has to remember that it's a list of strings for type safety. When ADD is called on a list, the runtime needs to check that the element type matches:

(add *my-list* "foo") ;; OK

(add *my-list* 12) ;; runtime error
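The runtime check described above can be mimicked in Python. This is only a sketch of the idea, not the design of the language in question: the class name `TypedList` and its fields are hypothetical stand-ins for (list T) and ADD.

```python
class TypedList:
    """A list that remembers its element type and checks it on every add."""

    def __init__(self, element_type):
        self.element_type = element_type
        self.items = []

    def add(self, element):
        # isinstance also accepts instances of subclasses of the element type.
        if not isinstance(element, self.element_type):
            raise TypeError(
                f"expected {self.element_type.__name__}, "
                f"got {type(element).__name__}"
            )
        self.items.append(element)


my_list = TypedList(str)
my_list.add("foo")       # OK
try:
    my_list.add(12)      # runtime error
except TypeError as e:
    print(e)             # expected str, got int
```

The point is that the instance carries its type argument around, so the check needs nothing but the instance and the element.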

One more thing I'd also like is the following:

(defclass (foo (<: X bar)))

That's a class foo that has one parameter, that must be a subtype of bar, analogous to Java's Foo<X extends Bar>. Being able to give such a bound to a type parameter seems quite essential.
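A bound like this can also be enforced at instantiation time. The Python sketch below assumes the check happens when the type argument is supplied; `Foo`, `Bar`, `SubBar` and the `bound` attribute are hypothetical names chosen to mirror the example.

```python
class Bar:
    pass


class SubBar(Bar):
    pass


class Foo:
    """Stand-in for (foo (<: X bar)): the type argument X must be a subtype of Bar."""

    bound = Bar

    def __init__(self, type_arg):
        # Reject type arguments that violate the declared bound.
        if not issubclass(type_arg, self.bound):
            raise TypeError(
                f"{type_arg.__name__} is not a subtype of {self.bound.__name__}"
            )
        self.type_arg = type_arg


ok = Foo(SubBar)      # fine: SubBar is a subtype of Bar
try:
    Foo(int)          # rejected: int is not a subtype of Bar
except TypeError as e:
    print(e)          # int is not a subtype of Bar
```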

Furthermore, it has to be possible to pass on type parameters to superclasses, as in:

(defclass (super-1 T))

(defclass (super-2 T))

(defclass (klass X Y) ((super-1 X) (super-2 Y)))

(klass X Y) is parameterized over two types, X and Y, and passes them on to its two superclasses, each of which is parameterized over one type.

Of course, it also has to be possible to remove parameterization, as in:

(defclass string-list ((list string)))

This defines an unparameterized class string-list, which is a subclass of (list string).

Another requirement is that it has to be possible to create instances of type arguments, as in:

(defclass (klass T))

(defmethod make-a-new-t ((k (klass T)))

(make T))

That's just cute, and may have some applications to dependency injection.
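In a language where classes are ordinary runtime values, this falls out almost for free. A rough Python equivalent, assuming the type argument has a zero-argument constructor (the names `Klass` and `make_a_new_t` are only transliterations of the example above):

```python
class Klass:
    """Stand-in for (klass T): the instance keeps its type argument around."""

    def __init__(self, type_arg):
        self.type_arg = type_arg


def make_a_new_t(k):
    # Instantiate whatever type the instance was parameterized with.
    return k.type_arg()


print(make_a_new_t(Klass(list)))   # []
print(make_a_new_t(Klass(dict)))   # {}
```

Swapping the type argument swaps what gets constructed, which is the dependency-injection flavor hinted at above.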

I'd also like to be able to write polymorphic functions:

(defun identity ((a T) -> T) a)

The arrow indicates the result type of the function. In this case it takes an instance of any type and returns it.

It should also be possible to give slots (member variables) of classes the types of type parameters:

(defclass (klass X Y) ()

((slot-1 X)

(slot-2 Y)))

This defines a class with two type parameters X and Y, (no superclasses,) and two slots slot-1 and slot-2 with the types X and Y, respectively.

These are the basic requirements. Now I need a plan! ;)

Tuesday, September 7, 2010

Fast dynamic casting

We have demonstrated that it is possible for a linker to generate integer type IDs for classes such that it may be verified by a simple integer modulo computation that one class derives from another in an object-oriented language. When combined with a suitable way of adjusting offsets, this method provides for a fast, constant-time dynamic casting algorithm. A 64-bit type ID is capable of representing quite large class hierarchies containing thousands of classes and at least nine levels deep.

I knew that prime numbers could be used for this somehow, but these guys have already worked it out.
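The basic trick can be sketched in a few lines of Python. This is a simplified illustration for single inheritance only, and it is not the paper's actual ID assignment scheme: here every class simply gets the next unused prime, and its type ID is that prime times its parent's ID. The `TypeRegistry` class and its method names are made up. Python integers are unbounded, so the 64-bit budget mentioned in the quote is not modeled.

```python
from itertools import count


def primes():
    """Yield 2, 3, 5, ... by trial division (fine for a small sketch)."""
    found = []
    for n in count(2):
        if all(n % p for p in found):
            found.append(n)
            yield n


class TypeRegistry:
    def __init__(self):
        self._primes = primes()
        self.ids = {}

    def register(self, name, parent=None):
        # Type ID = own fresh prime * parent's type ID (1 for a root class).
        own_prime = next(self._primes)
        parent_id = self.ids[parent] if parent is not None else 1
        self.ids[name] = own_prime * parent_id
        return self.ids[name]

    def derives_from(self, sub, sup):
        # sub derives from sup exactly when sup's ID divides sub's ID.
        return self.ids[sub] % self.ids[sup] == 0


reg = TypeRegistry()
reg.register("Shape")                    # 2
reg.register("Circle", parent="Shape")   # 3 * 2 = 6
reg.register("Square", parent="Shape")   # 5 * 2 = 10
print(reg.derives_from("Circle", "Shape"))   # True
print(reg.derives_from("Square", "Circle"))  # False
```

The test works because a class's own prime appears only in its ID and in the IDs of its descendants.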

Relatedly, I've also been thinking about using perfect hashing to implement multiple dispatch. It should work just fine, and actually reduce the number of type tests, compared to single dispatch. Unforch, I'm still in the make-it-work phase, which is followed by the make-it-correct phase. Only then does the glorious make-it-fast phase start.

Monday, September 6, 2010

Programmer feel-good quote

From a bit to a few hundred megabytes, from a microsecond to a half an hour of computing confronts us with completely baffling ratio of 10^9! The programmer is in the unique position that his is the only discipline and profession in which such a gigantic ratio, which totally baffles our imagination, has to be bridged by a single technology. He has to be able to think in terms of conceptual hierarchies that are much deeper than a single mind ever needed to face before. — E.W. Dijkstra

Sunday, September 5, 2010

Saturday, September 4, 2010

The many forms of polymorphism

Here are some forms of polymorphism, from Types and Polymorphism in Persistent Programming Systems by R.C.H. Connor:

ad hoc: An operation is defined over a number of different types, and its semantics may depend upon the type of its operands. An example is the operator "+", which may be defined over integers and reals, and has a different interpretation for each type.

parametric: Instances of the same type within a type description may be abstracted over by an implicit or explicit type parameter. An example is an identity function, where although the parameter and result may be of any type, they are statically known to be the same type.

inclusion: The type of a value may be partially abstracted over, so that unnecessary type information need not be stated. An example is a function which is defined over any record value which has an age field of type integer.

He further writes:

All of these language concepts have evolved independently from each other. Languages such as early assemblers contained no polymorphism whatsoever. Ad hoc polymorphism was the first to appear, in languages such as Fortran, which defined overloaded operators, such as "+", along with the ability to coerce values from integer to real according to the use of such operators. Parametric polymorphism appeared in ML, and inclusion polymorphism in Simula. Existentially quantified types as described by Mitchell and Plotkin is a model of abstract data types which introduces abstraction over a type description, and such types are included in the definition of parametric polymorphism given above.

The motivation for all of these diverse language models is the same: the need to abstract over type. As type systems include more static constraints, then flexibility is lost as well as safety gained. Some of this flexibility, however, may be regained without the loss of safety by the introduction of type abstraction. Polymorphism is viewed here not as some theoretical attribute of type systems but as a solution to a class of practical problems which require type abstraction.
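To make Connor's three forms concrete, here is a minimal sketch in Python. The names `describe`, `identity`, `is_adult`, `Person`, and `Tree` are made up for illustration and appear nowhere in the paper. Python only checks these annotations through an external type checker, so take it as a picture of the ideas rather than a statically enforced example.

```python
from dataclasses import dataclass
from functools import singledispatch
from typing import Protocol, TypeVar

T = TypeVar("T")


# Ad hoc: one operation name, a separate implementation per operand type.
@singledispatch
def describe(x: object) -> str:
    return "something else"


@describe.register
def _(x: int) -> str:
    return "an integer"


@describe.register
def _(x: float) -> str:
    return "a real"


# Parametric: argument and result may be of any type, but it is the same type.
def identity(x: T) -> T:
    return x


# Inclusion: any record with an integer `age` field is acceptable.
class HasAge(Protocol):
    age: int


def is_adult(record: HasAge) -> bool:
    return record.age >= 18


@dataclass
class Person:
    name: str
    age: int


@dataclass
class Tree:
    species: str
    age: int


print(describe(1), "/", describe(1.5))
print(identity("same type in, same type out"))
print(is_adult(Person("Ann", 30)), is_adult(Tree("oak", 3)))
```

`Person` and `Tree` share nothing except the `age` field, which is all `is_adult` asks for.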

Friday, September 3, 2010

From Choosing VMs to Feng Shui for HCI

Sean McDirmid:

I'm sick and tired of PL papers that present a novel language idea and then justify it with...a formal model...huh? This is a logical fallacy of the shaggy dog variety.

David Barbour:

developing a new language is also nigh completely pointless unless there is something useful and interesting you can say about it. ...

Usage doesn't justify a design. Valid technical design seems to be a relatively minor factor in market success. ...

most attempts to achieve 'ease-of-use' and 'ergonomics' involve guidance by reasoned principles... a sort of Feng Shui for HCI (which holistically includes PL).

Matt Hellige:

Programming languages are tools that require significant investment of time to learn to use well. To a large degree, their value is measured in terms of how valuable they are to the people who know them best.

Wednesday, August 25, 2010

No more "minimal early Lisps", pulleezz

Lisp is a bad model if you want some kind of axioms of computing

We already have the lambda calculus for that, and you can build a real language with that (see Haskell). If you want to write some minimal thingy in C, consider writing a Haskell, not a Lisp.

That you can write a Lisp evaluator in Lisp was interesting in 1959 (and maybe 1960), and let's face it, that Ur-Lisp was one broken language.

Car, cdr, wtf?

Contents of the address part of register, contents of the decrement part of register, what the fuck? What are they doing in a language, even a toy, written in 2010? Please use data structures.

Where are the closures?

If you're looking for a challenge, as opposed to redoing something that's been done ad meam nauseam for half a century fachrissakes, try to find a minimal and explainable way to do closures. Bonus points for efficient flat environments. Languages without closures are so 1959.

Where are the macros?

A Lisp without macros is meaningless.

Where's the control flow?

A Lisp without some kind of continuations and condition system is useless.

Where are the semantics?

If you read the RnRS attentively, you'll see that Lisp has evolved a deep and subtle set of semantic concepts, none of which feature in the Ur-Lisp.

What's the purpose?

Surely you're not learning much by repeating JMC's flawed Ur-Lisp from 1959. If you want to learn something, implement a language with closures and macros. If you want to learn more, make it a compiler. If you want to blow your head, implement hygienic macros or a higher-order module system or a static type checker. That's today's standard.

Look, Lisp is such a great language, but if anything we have to push it harder, not continuously go back to 1959.

Update: More fully-featured, modern Lisps, pulleezz

Saturday, August 21, 2010

Dalvik DEX

Dalvik's DEX format is another nice one.

Interestingly, Dalvik is the brainchild of Dan Bornstein, who was at Kaleida Labs (RIP), where he worked on ScriptX, one of the many avant-garde codes that fell victim to the WWW-induced ice age of GUI innovation.

Friday, August 20, 2010

The 2010 Linux Storage and Filesystem Summit

On testing:

It was suggested that the real test should be "put the new code on the Google cluster and see if the Internet breaks."

On Google:

There are two fundamental types of workload at Google. "Shared" workloads work like classic mainframe batch jobs, contending for resources while the system tries to isolate them from each other. "Dedicated workloads" are the ones which actually make money for Google - indexing, searching, and such - and are very sensitive to performance degradation. ...

The workloads exhibit a lot of big, sequential writes and smaller, random reads. Disk I/O latencies matter a lot for dedicated workloads; 15ms latencies can cause phone calls to the development group.

Thursday, August 19, 2010

Meta: Blogging is difficult

Both of these blogs have gone through the same stages:

- A few days of writing in solitude with a couple of friends.

- Chris Neukirchen mentions the blog on Anarchaia or Trivium.

- Al3x twitters about it.

- Attack of the unwashed HN masses (just kidding).

I'd like to keep a certain entertaining and informative niveau, and in the best case, I'd also like to improve it. In the early days, aggressive diss-posts and funny flames flow freely, because the audience is small and trusted. And those posts are entertaining. But in a more public setting, I have a bit of a bad feeling writing them, because I feel they may harm people I write about, when all I'm intending is to vent about some ideas I think are bad or ridiculous, or would like to tell a stupid joke.

So, what I want to say is that there are some difficulties to blogging that are seldom written about, and I'm still trying to figure out the boundaries of this strange new thingy, and where to draw the line between fact and fiction in blogging.

--Manuel

Monday, August 16, 2010

No Paranoia Rule

The first time I heard of what I now call the No Paranoia Rule was in the following comment by Luke Gorrie on LtU:

"Oh my, what if Luke installed an exception handler that ROT13 encoded every string on the heap, then how would Jane debug her programs?"

I hear that early on, people opposed subroutines for similar reasons. And of course, macros are frequently criticised for their potential of wreaking havoc.

But that's where the No Paranoia Rule comes into play. Stop being paranoid, and don't discount language features for their potentially devastating effect.

As Luke further states,

This [being paranoid] is not the way to illumination.

(Clemens A. Szyperski coined the term No Paranoia Rule.)

Saturday, August 7, 2010

You know you're reading LtU when...

I believe the preferable solution is typeclasses as record types, instances as records, scoped implicit parameters for propagating them around, associated types as record members, and a backtracking logic solver for instantiation. But that just solves the immediate problem; on top of that I'd like a dependent type system with staging to enable type dependency on values (e.g. arrays-with-length), and a higher-order logic solver so simple logic-programming idioms like let zip(as,bs)=f(x) in ... can be expressed and translated via instantiation into efficient runtime code. And a total (non-partial) function subset of the language for reasoning with proofs as programs. — Tim Sweeney

Monday, August 2, 2010

Three Principles of Lisp

Liberal use of syntactic abstraction

Lispers don't fret over when to build a domain-specific language (DSL) or not. When it makes sense, they build one, when it doesn't, they don't. Thanks to the trivial syntax, and the long experience with tools like pattern matching and quasiquotation, DSLs in Lisp are created rapidly.

In other languages, the creation of new language constructs or new languages requires countless hours of busy work. In Lisp, DSLs can be ready to use after a couple of lines entered at the REPL. In Lisp, creating DSLs is a no-brainer, and I think that's one of the cornerstones of Lisp.

Lisp isn't doctrinaire about creating DSLs - in fact it is standard advice to only use macros when plain functions won't do it anymore. But because creating DSLs in Lisp is so easy, they are created whenever they make sense. Which, as it turns out, is a lot of times. I would go so far as to say that the liberal use of syntactic abstraction makes Lisp a boilerplate-free language, but that's another topic.

There's no such thing as a constant mentality

Lisp thrives on interactivity. Newer languages like Haskell are encroaching on the natural habitat of Lisp (and surpass it in some areas), but none of them can match Lisp when it comes to no holds barred, nitty gritty interactivity. Even defconstant isn't necessarily constant, and for interactive systems (Emacs!) that's exactly what you want. In an interactive system, you can't tolerate constancy - this would be like using Lego blocks that are glued together.

I think a fundamental insight for understanding Lisp's superiority in the interactive domain is that Lisp is an asynchronous language, in a very peculiar sense: any Lisp expression may be entered at any time. In many cases, you could cut up a Lisp source file at top-level expression boundaries, rearrange them, and the resulting source file would still have exactly the same effects as the original file when loaded into a Lisp. (Just as one example, in Common Lisp it's possible to create subclasses of classes that don't exist yet.)

This is simply a natural consequence of making the read-eval-print loop (REPL) the cornerstone of Lisp semantics. In C, the dominant paradigm is main(), in Lisp it's (repl). And the top-level is tricky, some say hopeless. In Lisp, it is fundamental to always be able to define a function FOO that calls an as-yet undefined other top-level function BAR - a fundamental idiom of interactivity:

(defun foo ()
  (bar))

Toolchain approach

Lispers don't need to create a new language to try out new language design ideas. Many times, new languages can be defined as macros. If they can't, and an interpreter or compiler is needed, it can still stand on the shoulders of Lisp. In the Lisp world, new languages are built by combining large, battle-tested building blocks, and polishing or updating them when needed, instead of starting over from toothpicks and double-sided duct tape. A large Lisp like Common Lisp is like a toolchain of decades-old tools that have proven their worth, and have been codified in standards, folklore, and implementations.

Now of course, that's also true of other languages. But in other languages, starting a new language is a from-scratch affair. And on the long way of parsing, analyzing, and interpreting or compiling language constructs, the creator of the new language invariably introduces a lot of things that are different from other languages, so every new non-Lisp language is often fundamentally different from other languages, in subtle areas such as control flow or scoping, which requires decades of fixing.

In Lisp, new tools are tried out as separate functions, macros, DSLs, subsystems, or, in the extreme case, code walkers or complete new implementations. The rest of the language stays the same. Even a new Lisp implementation will often share a lot of the design space with other Lisps. And that's the reason Lisp had proper lexical scoping for decades, while it's just become a fixture in new languages, and that's also why Scheme now has hygienic macros and phase separation, and other languages will have them in decades - because in Lisp, all of these new constructs can be developed in the Lisp fabric, without rebooting the process every time. Which turns out as a nice metaphor:

Others reboot, Lisp keeps running.

Friday, July 30, 2010

So true

Not only do I use multiple languages professionally, I don't know some of them. – anamax

Monday, July 26, 2010

Concurrency's Shysters

[C]oncurrency is still being used to instill panic in the uninformed. This time, it is chip-level multiprocessing (CMP) instead of SMP that promises to be the End of Days -- and the shysters have taken a new guise in the form of transactional memory. The proponents of this new magic tonic are in some ways darker than their forebears: it is no longer enough to warn of Judgement Day -- they must also conjure up notions of Original Sin to motivate their perverted salvation. “The heart of the problem is, perhaps, that no one really knows how to organize and maintain large systems that rely on locking” admonished Nir Shavit recently in CACM. (Which gives rise to the natural follow-up question: is the Solaris kernel not large, does it not rely on locking or do we not know how to organize and maintain it? Or is that we do not exist at all?)

I know much too little about the subject to have an informed opinion, but I think countering hype in all its forms is important.

Sunday, July 25, 2010

Happenings in GCC-land

Tom Tromey is working on making GCC an incremental compiler: GCC will run as a server and maintain a model of the user's program. When a translation unit is recompiled, GCC will re-compile the minimum necessary. One of the goals is to make GCC a backend for IDEs that do stuff like autocompletion based on the program model (Interview, paper from GCC Summit).

People from Intel and others are working on transactional memory. Sections of code can be marked as atomic, and their temporary changes will be saved in thread-local storage. The semantics will make it appear as if transactions were protected by a single global lock.

It's a great time to be writing a Lisp->C compiler! :)

Efficient Method Dispatch in PCL

Some salient points:

They add a level of indirection between objects and their classes, called wrappers. An object points to a wrapper and that points to the class of the object. Inside each wrapper they store a random value for use by the memoization tables of generic functions.

Each generic function has a small memoization table that maps the hashed random value of a wrapper to the program counter (PC) of the corresponding method defined for the wrapper. The memoization tables use a simple random % memotablesize computation, and linear scanning after that.

How they handle class redefinition is swell: they simply set the wrapper's random value to zero, thereby invalidating the wrapper. They always leave the first entry of generic functions' memoization tables empty, and because an invalidated wrapper's random value will now hash to that empty entry, they only need to check for an invalid wrapper after a memoization miss occurs. Genius.

Generic functions run through a kind of state machine, depending on how they're used. (Similar to how polymorphic inline caches switch between mono-, poly-, and mega-morphic states, but with additional states tuned to CLOS semantics.) For example, if a generic function is only ever used with two different classes it can use a simple IF to test for these two classes. Here's another kicker: if a generic function's state machine detects that it's used as a slot accessor, it can directly store the slot offset instead of the method's PC in the memoization table. AWESOME.
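A toy model of the wrapper trick described above, in Python. It is a sketch under loud assumptions: `LispClass`, `Wrapper`, `Instance`, `GenericFunction`, `TABLE_SIZE`, and the flush-on-overflow policy are all invented here, methods are stored as Python callables rather than PCs, and valid wrapper values are re-drawn until they don't hash to slot 0. Real PCL certainly differs in the details.

```python
import random

TABLE_SIZE = 8  # hypothetical; slot 0 of every table is reserved and stays empty


def fresh_value():
    """A random nonzero value that never hashes to the reserved slot 0."""
    while True:
        v = random.randrange(1, 1 << 30)
        if v % TABLE_SIZE != 0:
            return v


class Wrapper:
    """Level of indirection between an instance and its class."""
    def __init__(self, cls):
        self.cls = cls
        self.value = fresh_value()


class LispClass:
    def __init__(self, name):
        self.name = name
        self.wrapper = Wrapper(self)

    def redefine(self):
        self.wrapper.value = 0        # invalidate: 0 hashes to the empty slot
        self.wrapper = Wrapper(self)  # new instances get a fresh wrapper


class Instance:
    def __init__(self, cls):
        self.wrapper = cls.wrapper


class GenericFunction:
    def __init__(self, methods):
        self.methods = methods            # class name -> callable
        self.table = [None] * TABLE_SIZE  # entries: (wrapper value, method)
        self.misses = 0

    def call(self, obj, *args):
        value = obj.wrapper.value
        i = value % TABLE_SIZE
        for _ in range(TABLE_SIZE):       # fast path: hash, then linear scan
            entry = self.table[i]
            if entry is None:             # slot 0 is always None, so scans stop
                break
            if entry[0] == value:
                return entry[1](obj, *args)
            i = (i + 1) % TABLE_SIZE
        return self._miss(obj, *args)

    def _miss(self, obj, *args):
        self.misses += 1
        if obj.wrapper.value == 0:        # validity is only checked on a miss
            obj.wrapper = obj.wrapper.cls.wrapper
        method = self.methods[obj.wrapper.cls.name]
        self._insert(obj.wrapper.value, method)
        return method(obj, *args)

    def _insert(self, value, method):
        i = value % TABLE_SIZE
        while i != 0 and self.table[i] is not None:
            i = (i + 1) % TABLE_SIZE
        if i == 0:                        # ran into the reserved slot: flush
            self.table = [None] * TABLE_SIZE
            i = value % TABLE_SIZE
        self.table[i] = (value, method)


point = LispClass("point")
show = GenericFunction({"point": lambda obj: "point method"})
p = Instance(point)
show.call(p)      # first call misses and fills the table
show.call(p)      # second call hits
point.redefine()  # p's old wrapper now has value 0
show.call(p)      # misses, notices the dead wrapper, picks up the new one
print(show.misses)
```

The point of the exercise is that the fast path never tests for validity: a dead wrapper simply cannot hit, so the check lives entirely in the miss handler.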

Thursday, July 22, 2010

Piet

Sorting in ColorForth.

Piet is awesome and takes this to a whole new level! Here are some sample programs:

Prints "Piet".

A random number generator.

Tuesday, July 20, 2010

Proof that parsing is evil

   total    blank lines w/   nb, nc    semi- preproc. file
   lines    lines comments    lines   colons  direct.
--------+--------+--------+--------+--------+--------+----
    1339      182      242     1072      666       25 lua-5.1.4/src/lparser.c

As DJB sez, don't parse.

This is getting silly

Guess what's a goal for C# 5.0?

Tadaaaaaaaaaaaaaa....

Compiler as a service. Which is code for eval. Anders Hejlsberg shows it off in the great The Future of C# at 59:30.

Unbeknownst until just now to your correspondent, the JVM actually has a similar facility, but as Earl says, it's over-engineered to the brink of spontaneous self-combustion. (Love that quote.)

(I don't want to diss Java and the JVM too much, btw. I think it's a great platform for when you need to get something done. It's just not inspiring.)

CaaS is great on so many levels! Lispers will be able to claim another first on a vital technology. No future languages will be REPL-less.

My prediction for C# 6.0: Hygienic macros, quasisyntax, and phase separation. Seriously.

Monday, July 19, 2010

On Understanding Types, Data Abstraction, and Polymorphism

It starts off with a simple functional language, and then adds universal and existential quantification. Universal quantification (∀) brings parametric polymorphism ("length returns the length of any list, whatever the type of its contents is"). Existential quantification (∃) lets you hide the details of a data type ("there exists some data type that implements a stack, but I'm not telling you more"). All of this is described in terms of a set-based description of types, which clears up a lot of issues.
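A rough Python rendering of the two quantifiers, with the caveat that Python's type hints are only checked by external tools and that `StackOps`, `list_stack`, `cons_stack`, and `push_then_pop` are names invented for this sketch, not taken from the paper. The universal case is a function that works for every element type; the existential case is a package of stack operations whose representation type the client never gets to see.

```python
from dataclasses import dataclass
from typing import Callable, Generic, Sequence, Tuple, TypeVar

A = TypeVar("A")
Rep = TypeVar("Rep")


# Universal: for all A, length takes a sequence of A and returns an int.
def length(xs: Sequence[A]) -> int:
    n = 0
    for _ in xs:
        n += 1
    return n


# Existential: "there exists some Rep with these operations".
@dataclass(frozen=True)
class StackOps(Generic[Rep]):
    empty: Rep
    push: Callable[[Rep, int], Rep]
    pop: Callable[[Rep], Tuple[int, Rep]]


# Two different hidden representations behind the same interface.
list_stack = StackOps(
    empty=[],
    push=lambda s, x: s + [x],
    pop=lambda s: (s[-1], s[:-1]),
)
cons_stack = StackOps(
    empty=None,
    push=lambda s, x: (x, s),
    pop=lambda s: s,  # a cons cell already is (top, rest)
)


# A client that only uses the operations and never inspects Rep.
def push_then_pop(ops: StackOps[Rep]) -> int:
    s = ops.push(ops.push(ops.empty, 1), 2)
    top, _rest = ops.pop(s)
    return top


print(length([1, 2, 3]), length("ab"))
print(push_then_pop(list_stack), push_then_pop(cons_stack))
```

`push_then_pop` gives the same answer for both packages because it can't tell them apart, which is the whole idea of the hiding.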

It seems that this paper is the granddaddy of papers about polymorphism. Its expository style is unmatched.

P.S. I discovered this paper while skimming Adriaan Moors' thesis Type Constructor Polymorphism for Scala: Theory and Practice. Its final chapter gives a great overview of where type systems are going.

Thursday, July 15, 2010

What's phase separation and when do you want it? (Part 1)

My tip: if you want some Serious Insight™, write a compiler and follow the footsteps of modern Lisp compilation managers such as PLT Scheme's or XCVB. What's the difference between those and the rest of the pack?

Phase separation.

That probably doesn't ring a bell with you unless a) you've followed Scheme recently, or b) are writing your own Scheme, or c) are simply an all-around wizard. All of which are good for you!

Let me explain... Lisp, because it has macros that are written in a Turing-complete language (Lisp), is fundamentally a multi-phase language. We could also call it multi-time. Why? Because there's your ordinary runtime runtime, but before that happens, there's also a compile-time runtime. The right-hand sides of macro definitions run in the compile-time runtime. (I'll just call the

This is another concept, like hygiene, that is only now (or just recently) becoming understood by the peoples. Where by the peoples I mean your correspondent. (The concept has been debated at length on the wonderful r6rs-discuss list (sorry, I'm too lazy to dig up the threads). It's been discussed almost more than case sensitivity, which should give you an indication of how novel and controversial the topic is.) This is deep stuff. At first. And later, too.

Compile-Time

One fundamental feature of Lisp is that you get to extend the compile-time with your own code, macros. (Now, Tom Lord would say that's totally the wrong way to do it, but hey, that's the way it's been done the last couple decades, so I'm covering that.)

There's a bit of sneakiness in how macro calls look like function calls in Lisp when they're a totally different thing, and there are languages that make a syntactic difference between the two, but Lisp doesn't, and for the better.

The first fundamental thing to understand is that macros don't exist at runtime.

When you do a (defmacro foo () ...) in a Lisp with phase separation, there will be no object called FOO in the runtime. But where is it? It's in the compile-time.

Yeaaah.

I know, it's a seriously weird concept, but it's absolutely fundamental to grasp this: macros are compile-time entities, they don't exist at runtime.

So when and where is this compile-time? The when is easy: when you compile the file. (Of course it's not that easy, but let's leave it at that for now.)

The where is more tricky. A Lisp source file contains two kinds of stuff that are fundamentally different: macro definitions exist at compile-time, while the other stuff such as global variables and functions (obviously) exist at runtime. (That a source file describes multiple runtimes, the runtime runtime and the compile-time runtime, is one of those subtle, small things that add up to make Lisp the powerhouse that it is.)

That's where XCVB-and-SBCL (and PLT Scheme, and probably Chez, and probably others) come in with a shattering device: the CFASL.

CFASLs

Let's take a look at non-C, plain FASLs first.

A FASL (fast-load file) is the output that a Lisp compiler produces from a .lisp input file. If your compiler compiles to bytecode, the FASL contains bytecode. If your compiler compiles to machine code, the FASL contains machine code. If your compiler compiles to JavaScript, the FASL contains JavaScript. FASLs are fundamentally implementation-specific, but always contain some form of executable code which can be run on a (real or virtual) machine.

What's in a FASL? Say we have a Lisp file containing the following expressions:

(defun foo ()
  (print "hello world!"))
(foo)

Then, the FASL will contain executable code that a) defines a new function called FOO, and b) calls that function, printing "hello world!" to the console. Simple.

Now what's in the FASL for the following Lisp file? Hint: not so simple.

(defmacro bar ()
  `(print "hello meta-world!"))
(bar)

The FASL for that file will (gasp!) only contain executable code for (print "hello meta-world!"). The reason is that we're defining the macro BAR, and the (macro) call to BAR in the third line gets expanded at compile-time to the PRINT expression.

And that's where CFASLs come in. (The C stands for compiler, or compile-time.) The CFASL of the above Lisp file will contain (defmacro bar () `(print "hello meta-world!")).

See? The FASL contains the runtime expressions of the Lisp file, and the CFASL contains the compile-time expressions of the Lisp file, and never the twain shall meet.
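The FASL/CFASL split can be modeled in a few lines of Python. This is a toy under stated assumptions: forms are nested tuples, the "FASL" holds forms instead of compiled code, only zero-argument macros with a fixed template are supported, expansion happens at top level only, and `compile_file` and `load` are hypothetical names that have nothing to do with how SBCL or XCVB actually do it.

```python
def compile_file(forms):
    """Split a list of top-level forms into (fasl, cfasl)."""
    macros = {}  # compile-time environment: macro name -> expansion template
    fasl, cfasl = [], []
    for form in forms:
        head = form[0]
        if head == "defmacro":
            _, name, _params, template = form
            macros[name] = template      # lives in the compile-time only
            cfasl.append(form)
        elif head in macros:
            fasl.append(macros[head])    # macro call: emit its expansion
        else:
            fasl.append(form)            # ordinary runtime form
    return fasl, cfasl


def load(fasl):
    """'Run' a FASL; only PRINT is understood in this toy."""
    output = []
    for form in fasl:
        if form[0] != "print":
            raise ValueError(f"unknown runtime form: {form[0]}")
        output.append(form[1])
    return output


source = [
    ("defmacro", "bar", (), ("print", "hello meta-world!")),
    ("bar",),
]
fasl, cfasl = compile_file(source)
print(fasl)        # only the expanded PRINT form
print(cfasl)       # only the macro definition
print(load(fasl))  # running the program never touches the CFASL
```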

Why, oh why?

Now, this is where it gets tricky. >:->

We have to distinguish the two ways in which an Acceptable Lisp™ needs to work: interactive REPL mode, and static file-compilation mode.

Let's start with the file-compilation mode, because it's simpler, and fachrissakes, let's not discuss module dependencies for now.

In file-compilation mode, the Lisp's job is dead simple: slurp in the source file, process it, and produce a FASL from the runtime expressions and a CFASL file from the compile-time expressions.

If we want to execute the resulting program, all we have to do is load the FASL. Loading means simply to execute it, and since it contains the runtime expressions of the input file, it will do whatever we told it to do. Note that we're not using the CFASL! Since the CFASL contains compile-time expressions, there's no need to even touch the CFASL when we want to run our program.

Now, for something a bit more challenging, the good ol' REPL mode.

So, we're at the REPL, feeling free, taking a sip, and sneaking a sneaky (defmacro quux () `(print "hello")) in there.

WTF? What's the Lisp supposed to do? After all, a macro definition is not a runtime expression!

Now, older Lisps, which are not so big on following the latest trends in the scene, or newer Lisps that just don't care, will get this wrong. They'll intermingle runtime and compile-time in the REPL, leading to all kinds of undesirable effects, as Matthew Flatt will be pleased to point out to you. This means you'll have the compile-time entity in your runtime, which is simply sick. (In this post's model; Tom Lord and John Shutt will tell you something totally different.) One of the bad results of that is that code that works at the REPL won't necessarily work when put into a file. But don't take my blahger's word for it, take Professor Flatt's peer-reviewed one.

So what's an Acceptable Lisp™ to do, at the REPL, when the luser enters a DEFMACRO? An Acceptable Lisp™ has to keep the runtime runtime and the compile-time runtime strictly separated. This means it will need one environment for the runtime, that contains the global variables and functions, and another, completely separate environment for the compile-time, that contains macro definitions.
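Here is the same idea for the REPL, again as a toy in Python rather than a description of any real Lisp. `Repl`, `enter`, `expand`, and `evaluate` are invented names, forms are tuples, macros take no arguments, and function bodies are a single form. What matters is that there are two dictionaries and a DEFMACRO only ever touches one of them.

```python
class Repl:
    def __init__(self):
        self.runtime = {}       # global functions (already macro-expanded)
        self.compile_time = {}  # macro definitions, and nothing else

    def enter(self, form):
        head = form[0]
        if head == "defmacro":                  # (defmacro name template)
            self.compile_time[form[1]] = form[2]
            return form[1]
        if head == "defun":                     # (defun name body)
            self.runtime[form[1]] = self.expand(form[2])
            return form[1]
        return self.evaluate(self.expand(form))

    def expand(self, form):
        """Expand macro calls using the compile-time environment only."""
        while form[0] in self.compile_time:
            form = self.compile_time[form[0]]
        return form

    def evaluate(self, form):
        """Evaluate an expanded form using the runtime environment only."""
        head = form[0]
        if head == "print":
            return form[1]
        if head in self.runtime:
            return self.evaluate(self.runtime[head])
        raise NameError(f"undefined function: {head}")


repl = Repl()
repl.enter(("defmacro", "quux", ("print", "hello")))
print("quux" in repl.compile_time, "quux" in repl.runtime)
print(repl.enter(("quux",)))
repl.enter(("defun", "foo", ("quux",)))
print(repl.runtime["foo"])
```

After the DEFUN, the runtime environment holds the expanded PRINT form for FOO and no trace of QUUX.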

Let's try to wrap this up...

Wrap-up

We've seen that a Lisp file contains code for two different times: the runtime runtime and the compile-time runtime. If we want to make our users' lives easier, we as Lisp implementors have to keep these times separate.

In Part II of this post, I'll write about how PLT Scheme handles module dependencies in such a phase-separated model.

Now I gotsta hack.

Wednesday, July 14, 2010

semantic, adj.

I propose to use the word semantic the same way for PLs.

If a language has wack semantics, you'd say ``Woah, that language is totally semantic!''

Monday, July 12, 2010

I, for one, welcome our new app-inventing grandchildren of Interlisp overlords

Outlining! 2D forms!

App Inventor for Android is based on OpenBlocks, which looks very much like Scratch, but uses Kawa instead of Squeak.

Sunday, July 11, 2010

Optimism

One of the things that stands out to me about APL, and CS in general is the pure, unfettered optimism concerning the human condition. What other field of human endeavor seeks to provide tools to enhance human productivity and intelligence, to augment the faculties of the mind? The implicit assumption that this can be done is a leap of hope in the purest form.

"Tools for thinking" I mean who would think of such a thing? Seems like this goes unstated a lot of the time, I imagine most of us here take it for granted. But it really is an amazing proposition (imo).

Thursday, July 8, 2010

Using multiple kinds of parentheses in Lisp considered silly

I'm stealing a picture from that post though, to show something that bothers me:

Yeah, that WTF? is spot on, but for a different reason.

Note how a couple of unshapely ]'s have snuck their way into the midst of that lovely column of gorgeous )'s.

Now why is that WTF?-worthy? Well, one of the Big Benefits of using just two characters for all punctuation is the editing convenience you get from that. After you've moved code around in Lisp, you just keep your finger on the delete key until the superfluous parens are gone for good, or else you just keep your finger on the )-key until the right amount of parens are where they should be.

This is completely broken when you use, for some irrational reason, a second or – gasp! – third kind of parenthesis. Doing that brings you back to the laughable world of lots of irrational and silly punctuation. You have to manually match up a [ with a ]. Think about that! It defeats one of the Big Benefits of using S-expressions, the only sane, rational syntax there is.

Silly.

(Of course, a lot of the time you use Emacs's sexp-aware killing and stuff, and then you can get away with multiple kinds of parens. But still, that doesn't work in the general case.)

Wednesday, July 7, 2010

C# 4.0: The industrial response to Lisp?

But more importantly, the features of the language really raise the bar for all dynlangs out there. With dynamic, C# effectively usurps untyped programming, while maintaining static types elsewhere. With LINQ's expression trees, they seem to tackle the same problem as Lisp does with S-expressions. And MSR's work on evolving generics, a lot of which seems to find its way into C#, is highly interesting.

That C# qua language is way ahead of Java is clear. What's more worrying is that all dynlangs, including the few acceptable ones, like Common Lisp and Factor, are starting to look horribly dated against C#. Seriously.

Note the following quote by grandmaster Tim Sweeney:

In Bracha's work here, and in Microsoft's work on C# 3.0, I sense an undercurrent dragging the language model toward the LISP/Smalltalk "ideal" of metadata-intensive, introspective, dynamic, loosely-typeable programming. ... If you go this route, one day you'll realize you evolved the ultimate hacker language, and it became a godawful mess for writing real programs. (My emphasis)

Evolving the ultimate hacker language, and making it a godawful mess for writing real programs, is of course the topic of this blog. Note also the following quote on C#'s type system, again by Sweeney:

These [Variance and Generalized Constraints for C# Generics] extensions stretch the C language family to an impressive new local optima

Now, I must say that I haven't ever used C#, so I don't know how it stacks up ITRW. I'm assuming, based on long observation, that Microsoft with high likelihood fucked up the pragmatics completely, and that programming in C#, as opposed to reading about it, is deeply depressing.

But what I'm saying is that the Perl-Python-Ruby-PHP-Tcl-Lisp-language has to catch up, maybe for the first time since 1959!